OSM Performance Management

This documentation now corresponds to Release FIVE; previous documentation related to Performance Management has been deprecated.

Activating Metrics Collection

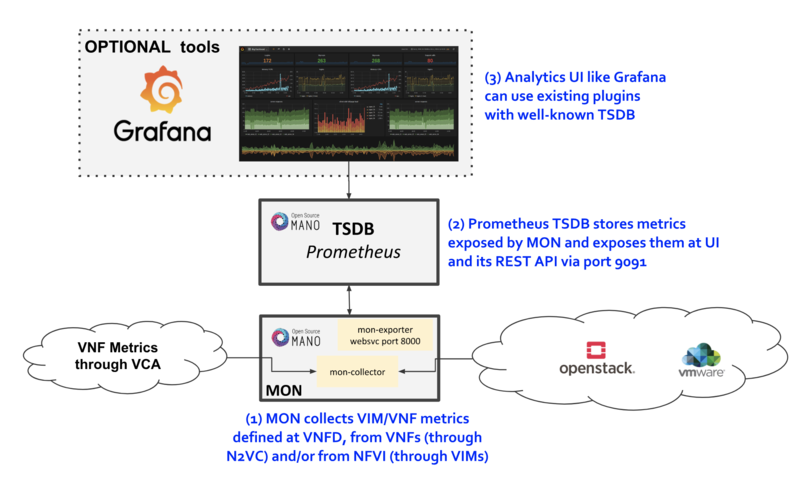

OSM MON features a "mon-collector" module which will collect metrics whenever specified at the descriptor level. For metrics to be collected, they have to exist first at any of these two levels:

- NFVI - made available by VIM's Telemetry System

- VNF - made available by OSM VCA (Juju Metrics)

Reference diagram:

VIM Metrics

For VIM metrics to be collected, your VIM must support a Telemetry system. As of Release 5.0.0, metric collection has been tested with OpenStack VIMs using Keystone v3 authentication and legacy or Gnocchi-based telemetry services. Support for other VIM types is expected to be added during the Release FIVE cycle.

Before activating metrics, it is important to make the MON container aware of the granularity of your Telemetry system. For example, if it collects metrics every 60 seconds (as in Gnocchi's 'medium' archive policy), you would need to update MON's OS_DEFAULT_GRANULARITY environment variable like this:

docker service update --env-add OS_DEFAULT_GRANULARITY=60 osm_mon

The next step is to activate metrics collection in your VNFDs. Every metric to be collected from the VIM for each VDU has to be described first at the VDU level and then at the VNF level. For example:

    vdu:
    - id: vdu1
      ...
      monitoring-param:
      - id: metric_vdu1_cpu
        nfvi-metric: cpu_utilization
      - id: metric_vdu1_memory
        nfvi-metric: average_memory_utilization
    ...
    monitoring-param:
    - id: metric_vim_vnf1_cpu
      name: metric_vim_vnf1_cpu
      aggregation-type: AVERAGE
      vdu-monitoring-param:
        vdu-ref: vdu1
        vdu-monitoring-param-ref: metric_vdu1_cpu
    - id: metric_vim_vnf1_memory
      name: metric_vim_vnf1_memory
      aggregation-type: AVERAGE
      vdu-monitoring-param:
        vdu-ref: vdu1
        vdu-monitoring-param-ref: metric_vdu1_memory

As you can see, a list of "NFVI metrics" is defined first at the VDU level; each entry contains an ID and the corresponding normalized metric name (in this case, "cpu_utilization" and "average_memory_utilization"). Then, at the VNF level, a list of monitoring-params is defined, each with an ID, name, aggregation-type and source ('vdu-monitoring-param' in this case), which refers back to one of the VDU-level entries.
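The two-level referencing described above can be checked mechanically. The following is a minimal sketch, assuming the VNFD has already been loaded into a Python dict; the helper name `find_dangling_refs` and the trimmed-down descriptor are hypothetical and are not part of OSM.

```python
# Hypothetical, trimmed-down VNFD fragment expressed as a Python dict.
# Only the keys shown in the example descriptor are used.
vnfd = {
    "vdu": [
        {
            "id": "vdu1",
            "monitoring-param": [
                {"id": "metric_vdu1_cpu", "nfvi-metric": "cpu_utilization"},
                {"id": "metric_vdu1_memory", "nfvi-metric": "average_memory_utilization"},
            ],
        }
    ],
    "monitoring-param": [
        {
            "id": "metric_vim_vnf1_cpu",
            "name": "metric_vim_vnf1_cpu",
            "aggregation-type": "AVERAGE",
            "vdu-monitoring-param": {
                "vdu-ref": "vdu1",
                "vdu-monitoring-param-ref": "metric_vdu1_cpu",
            },
        },
        {
            "id": "metric_vim_vnf1_memory",
            "name": "metric_vim_vnf1_memory",
            "aggregation-type": "AVERAGE",
            "vdu-monitoring-param": {
                "vdu-ref": "vdu1",
                "vdu-monitoring-param-ref": "metric_vdu1_memory",
            },
        },
    ],
}


def find_dangling_refs(vnfd):
    """Return IDs of VNF-level monitoring-params whose VDU-level target is missing."""
    # Collect every (vdu id, monitoring-param id) pair declared at the VDU level.
    known = {
        (vdu["id"], param["id"])
        for vdu in vnfd.get("vdu", [])
        for param in vdu.get("monitoring-param", [])
    }
    dangling = []
    for param in vnfd.get("monitoring-param", []):
        ref = param.get("vdu-monitoring-param")
        if ref is None:
            continue  # this param is not sourced from a VDU-level NFVI metric
        if (ref["vdu-ref"], ref["vdu-monitoring-param-ref"]) not in known:
            dangling.append(param["id"])
    return dangling


print(find_dangling_refs(vnfd))
```

An empty result means every VNF-level entry resolves to a VDU-level one. This is only an illustration of the referencing rule, not a substitute for validating against the OSM Information Model.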

Additional notes:

- Available attributes and values can be directly explored at the OSM Information Model

- A complete VNFD example can be downloaded from here.

- Normalized metric names are: cpu_utilization, average_memory_utilization, disk_read_ops, disk_write_ops, disk_read_bytes, disk_write_bytes, packets_dropped_<nic number>, packets_received, packets_sent
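As a small illustration of the list above, the sketch below checks whether a string is one of the normalized names before it is used as an `nfvi-metric` value. The function name is hypothetical, and it assumes that `<nic number>` is a plain non-negative integer suffix, which the text above does not state explicitly.

```python
import re

# Fixed normalized names, copied from the list above.
NORMALIZED_METRICS = {
    "cpu_utilization",
    "average_memory_utilization",
    "disk_read_ops",
    "disk_write_ops",
    "disk_read_bytes",
    "disk_write_bytes",
    "packets_received",
    "packets_sent",
}

# Assumption: the NIC number is written as decimal digits, e.g. packets_dropped_0.
PACKETS_DROPPED = re.compile(r"packets_dropped_\d+")


def is_normalized_metric(name):
    """Return True if name is one of the normalized NFVI metric names."""
    return name in NORMALIZED_METRICS or PACKETS_DROPPED.fullmatch(name) is not None


for candidate in ("cpu_utilization", "packets_dropped_0", "cpu_util"):
    print(candidate, is_normalized_metric(candidate))
```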

VNF Metrics

Metrics can also be collected directly from VNFs using VCA, through the Juju Metrics framework. A simple charm containing a metrics.yaml file in its root folder specifies the metrics to be collected and the command that produces each one.

For example, the following metrics.yaml file collects three metrics from the VNF, called 'users', 'load' and 'load_pct':

    metrics:
      users:
        type: gauge
        description: "# of users"
        command: who|wc -l
      load:
        type: gauge
        # the first field of /proc/loadavg is the 1-minute load average
        description: "1 minute load average"
        command: cat /proc/loadavg |awk '{print $1}'
      load_pct:
        type: gauge
        description: "1 minute load average percent"
        command: cat /proc/loadavg | awk '{load_pct=$1*100.00} END {print load_pct}'

Please note that the granularity of this metric collection method is fixed to 5 minutes and cannot be changed at this point.
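To make clear what the `load` and `load_pct` commands compute, here is a rough Python equivalent of the two awk one-liners. It is a sketch for illustration only: the function names and the sample string are hypothetical, and the charm itself runs the shell commands, not this code. The first three fields of /proc/loadavg are the 1-, 5- and 15-minute load averages.

```python
def parse_loadavg(text):
    """Return the 1-, 5- and 15-minute load averages from /proc/loadavg content."""
    fields = text.split()
    return float(fields[0]), float(fields[1]), float(fields[2])


def load_metrics(text):
    """Mirror the awk commands: both metrics are derived from field 1."""
    one_min, _five_min, _fifteen_min = parse_loadavg(text)
    return {
        "load": one_min,              # awk '{print $1}'
        "load_pct": one_min * 100.0,  # awk '{load_pct=$1*100.00} END {print load_pct}'
    }


# Hypothetical sample of what /proc/loadavg may contain.
sample = "0.50 0.35 0.30 1/123 4567"
print(load_metrics(sample))
```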

After metrics.yaml is available, there are two options for describing the metric collection in the VNFD:

1) VNF-level VNF metrics

    mgmt-interface:
      cp: vdu_mgmt # it is important to set the mgmt VDU or CP for metrics collection
    vnf-configuration:
      initial-config-primitive:
      ...
      juju:
        charm: testmetrics
      metrics:
      - name: load
      - name: load_pct
      - name: users
    ...
    monitoring-param:
    - id: metric_vim_vnf1_load
      name: metric_vim_vnf1_load
      aggregation-type: AVERAGE
      vnf-metric:
        vnf-metric-name-ref: load
    - id: metric_vim_vnf1_loadpct
      name: metric_vim_vnf1_loadpct
      aggregation-type: AVERAGE
      vnf-metric:
        vnf-metric-name-ref: load_pct

Additional notes:

- Available attributes and values can be directly explored at the OSM Information Model

- A complete VNFD example with VNF metrics collection (VNF-level) can be downloaded from here.

2) VDU-level VNF metrics

    vdu:
    - id: vdu1
      ...
      interface:
      - ...
        mgmt-interface: true # it is important to set the mgmt interface for metrics collection
        ...
      vdu-configuration:
        initial-config-primitive:
        ...
        juju:
          charm: testmetrics
        metrics:
        - name: load
        - name: load_pct
        - name: users
    ...
    monitoring-param:
    - id: metric_vim_vnf1_load
      name: metric_vim_vnf1_load
      aggregation-type: AVERAGE
      vdu-metric:
        vdu-ref: vdu1
        vdu-metric-name-ref: load
    - id: metric_vim_vnf1_loadpct
      name: metric_vim_vnf1_loadpct
      aggregation-type: AVERAGE
      vdu-metric:
        vdu-ref: vdu1
        vdu-metric-name-ref: load_pct

Additional notes:

- Available attributes and values can be directly explored at the OSM Information Model

- A complete VNFD example with VNF metrics collection (VDU-level) can be downloaded from here.

As with VIM metrics, a list of "metrics" is defined first at either the VNF or VDU "configuration" level; each entry contains a name that comes from the metrics.yaml file. Then, at the VNF level, a list of monitoring-params is defined, each with an ID, name, aggregation-type and source, which in this case can be a "vdu-metric" or a "vnf-metric".
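The relationship between the "metrics" list and the monitoring-params can be sketched as a simple consistency check. The example below assumes the VNFD is available as a Python dict that uses the same keys as the fragments above; the helper name `unknown_metric_refs` and the sample descriptor (including its deliberately wrong last entry) are hypothetical.

```python
def unknown_metric_refs(vnfd):
    """Return IDs of monitoring-params that reference an undeclared metric name."""
    # Names declared under vnf-configuration (VNF-level option).
    vnf_names = {m["name"] for m in vnfd.get("vnf-configuration", {}).get("metrics", [])}
    # Names declared under each vdu-configuration (VDU-level option), keyed by VDU id.
    vdu_names = {
        vdu["id"]: {m["name"] for m in vdu.get("vdu-configuration", {}).get("metrics", [])}
        for vdu in vnfd.get("vdu", [])
    }
    unknown = []
    for param in vnfd.get("monitoring-param", []):
        if "vnf-metric" in param:
            declared = vnf_names
            wanted = param["vnf-metric"]["vnf-metric-name-ref"]
        elif "vdu-metric" in param:
            ref = param["vdu-metric"]
            declared = vdu_names.get(ref["vdu-ref"], set())
            wanted = ref["vdu-metric-name-ref"]
        else:
            continue  # e.g. a VIM metric, which is not checked here
        if wanted not in declared:
            unknown.append(param["id"])
    return unknown


# Hypothetical VDU-level descriptor; the last entry is wrong on purpose.
sample_vnfd = {
    "vdu": [
        {
            "id": "vdu1",
            "vdu-configuration": {
                "juju": {"charm": "testmetrics"},
                "metrics": [{"name": "load"}, {"name": "load_pct"}, {"name": "users"}],
            },
        }
    ],
    "monitoring-param": [
        {"id": "metric_vim_vnf1_load",
         "vdu-metric": {"vdu-ref": "vdu1", "vdu-metric-name-ref": "load"}},
        {"id": "metric_vim_vnf1_loadpct",
         "vdu-metric": {"vdu-ref": "vdu1", "vdu-metric-name-ref": "load_percent"}},
    ],
}

print(unknown_metric_refs(sample_vnfd))
```

Here the second monitoring-param is reported because `load_percent` is not a name declared in the "metrics" list (the declared name is `load_pct`).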

Retrieving metrics from Prometheus TSDB

Once the metrics are being collected, they are stored in the Prometheus Time-Series DB with an 'osm_' prefix, and there are a number of ways in which you can retrieve them.

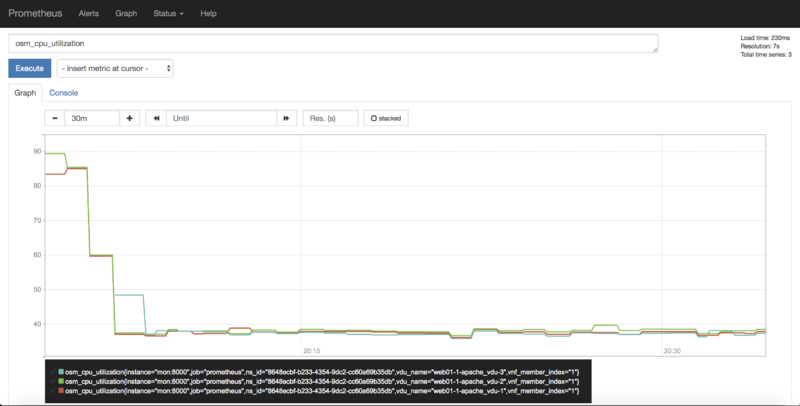

1) Visualizing metrics in Prometheus UI

Prometheus TSDB includes its own UI, which you can visit at http://[OSM_IP]:9091

From there, you can:

- Type any metric name (e.g. osm_cpu_utilization) in the 'expression' field and see its current value or a graph of its values over time.

- Visit the Status --> Targets menu to monitor the connection status between Prometheus and MON (through 'mon-exporter')

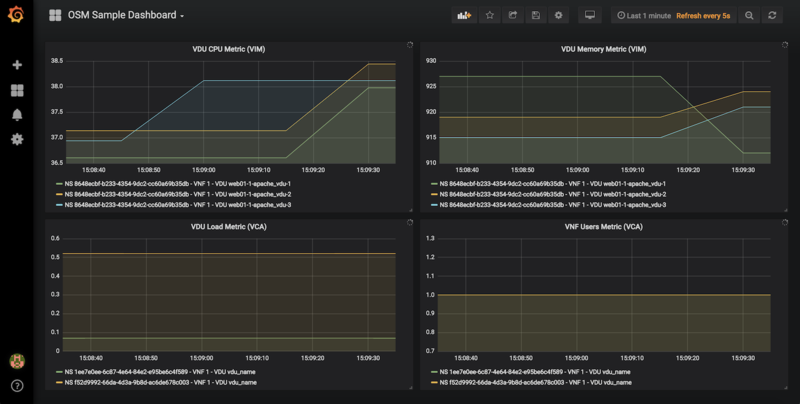

2) Integrating Prometheus with Grafana

There is a well-known integration between these two components that allows Grafana to easily show interactive graphs for any metric. You can use any existing Grafana installation or the one that ships with OSM as the experimental "pm_stack".

To install the 'pm_stack' (which today includes only Grafana), in an existing OSM installation, run the installer in the following way:

./install.sh -o pm_stack

Otherwise, if you are installing from scratch, you can simply run the installer with the pm_stack option.

./install.sh --pm_stack

Once installed, access Grafana with its default credentials (admin / admin) at http://[OSM_IP_address]:3000. Click the 'Manage' option in the 'Dashboards' menu (on the left) to find a sample dashboard containing two graphs for VIM metrics and two graphs for VNF metrics. You can change them or add more as desired.

3) Interacting with Prometheus directly through its API

Even though many analytics applications, like Grafana, include their own integration for Prometheus, some other applications do not include it out of the box or there is a need to build a custom integration.

In such cases, the Prometheus HTTP API can be used to gather any metrics. A couple of examples are shown below:

Example with Date range query

curl 'http://localhost:9091/api/v1/query_range?query=osm_cpu_utilization&start=2018-12-03T14:10:00.000Z&end=2018-12-03T14:20:00.000Z&step=15s'

Example with Instant query

curl 'http://localhost:9091/api/v1/query?query=osm_cpu_utilization&time=2018-12-03T14:14:00.000Z'

Further examples and API calls can be found at the Prometheus HTTP API documentation.
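For a custom integration, the range query shown with curl can also be issued from code. The following is a minimal sketch using only the Python standard library. It assumes Prometheus is reachable at the same address as in the curl examples; the function names and the sample response (including its label names) are hypothetical, while the `status`/`data`/`result`/`values` layout follows the Prometheus HTTP API.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PROMETHEUS_URL = "http://localhost:9091"  # same host and port as the curl examples


def build_range_query_url(metric, start, end, step="15s", base_url=PROMETHEUS_URL):
    """Build a /api/v1/query_range URL; urlencode escapes the timestamps."""
    params = urlencode({"query": metric, "start": start, "end": end, "step": step})
    return f"{base_url}/api/v1/query_range?{params}"


def extract_series(payload):
    """Turn a query_range response into {labels: [(timestamp, value), ...]}."""
    if payload.get("status") != "success":
        raise ValueError(f"query failed: {payload.get('error', 'unknown error')}")
    series = {}
    for result in payload["data"]["result"]:
        labels = tuple(sorted(result["metric"].items()))
        # Prometheus returns sample values as strings; convert them to floats.
        series[labels] = [(float(ts), float(value)) for ts, value in result["values"]]
    return series


def fetch_range(metric, start, end, step="15s"):
    """Query a live Prometheus instance (not called in this sketch)."""
    with urlopen(build_range_query_url(metric, start, end, step), timeout=10) as resp:
        return extract_series(json.load(resp))


# Hypothetical response body, shaped like a query_range result (label names are made up).
SAMPLE_RESPONSE = {
    "status": "success",
    "data": {
        "resultType": "matrix",
        "result": [
            {
                "metric": {"__name__": "osm_cpu_utilization", "vdu_name": "vdu1"},
                "values": [[1543846200, "12.5"], [1543846215, "13.0"]],
            }
        ],
    },
}

print(build_range_query_url("osm_cpu_utilization",
                            "2018-12-03T14:10:00.000Z", "2018-12-03T14:20:00.000Z"))
print(extract_series(SAMPLE_RESPONSE))
```

Keeping URL construction and response parsing separate from the network call makes both easy to check without a running Prometheus instance.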

4) Interacting directly with MON Collector

Prometheus TSDB stores metrics by periodically querying Prometheus 'exporters', which are configured as 'targets'. Exporters expose current metrics in a specific format that Prometheus can understand. More information can be found here.

OSM MON features a "mon-exporter" module that exports current metrics through port 8000. Please note that, by default, this port is not exposed outside OSM's Docker network.

A tool that understands Prometheus 'exporters' (for example, Elastic Metricbeat) can be plugged in to integrate directly with "mon-exporter". To get an idea of what metrics look like in this particular format, you could:

1. Get into MON console

docker exec -ti osm_mon.1.[id] bash

2. Install curl

apt -y install curl

3. Use curl to get the current metrics list

curl localhost:8000

Please note that as long as the Prometheus container is up, it will continue retrieving and storing metrics, in addition to any other tool/DB you connect to "mon-exporter".
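If you want to consume the "mon-exporter" output from your own tool, the text returned by the curl command above can be parsed line by line. The sketch below handles the common `name{label="value",...} value` shape of the Prometheus text exposition format. The sample text, its label names and the function name are hypothetical, and the parser is deliberately simplified (it does not unescape label values and it ignores optional timestamps).

```python
import re

# metric name, optional {labels}, then the sample value
SAMPLE_LINE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>.*)\})?\s+(?P<value>\S+)'
)
# one label pair: key="value" (escaped characters are kept as-is)
LABEL = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def parse_exposition(text):
    """Return a list of (metric name, labels dict, float value) tuples."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and # HELP / # TYPE comments
        match = SAMPLE_LINE.match(line)
        if match is None:
            continue  # not a sample line this sketch understands
        labels = dict(LABEL.findall(match.group("labels") or ""))
        samples.append((match.group("name"), labels, float(match.group("value"))))
    return samples


# Hypothetical exporter output; real label names may differ.
SAMPLE_TEXT = """\
# HELP osm_cpu_utilization OSM metric
# TYPE osm_cpu_utilization gauge
osm_cpu_utilization{ns_id="ns-1",vdu_name="vdu1"} 12.5
osm_users{ns_id="ns-1",vdu_name="vdu1"} 2.0
"""

for name, labels, value in parse_exposition(SAMPLE_TEXT):
    print(name, labels, value)
```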

5) Using your own TSDB

OSM MON integrates Prometheus through a plugin/backend model, so other backends can be developed if desired. If you are interested in contributing such a backend, you can ask for details in our Slack #mon channel or through the OSM Tech mailing list.

Your feedback is most welcome! You can send us your comments and questions to OSM_TECH@list.etsi.org or join the OpenSourceMANO Slack Workplace. See hereafter some best practices to report issues on OSM.