OpenVIM installation (Release One)

Garciadeblas (talk | contribs) |


Revision as of 09:47, 16 November 2016

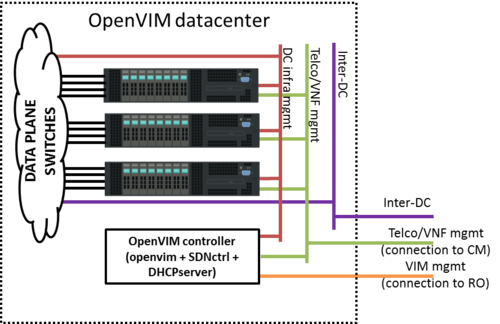

Infrastructure

To run openvim in normal mode (see below for the available modes) and deploy dataplane VNFs, an appropriate infrastructure is required. Below is a reference architecture for an openvim-based DC deployment.

Openvim must be accessible from the Resource Orchestrator (openmano). Specifically, openvim needs:

- To make its API accessible from the Resource Orchestrator (openmano). That's the purpose of the VIM mgmt network in the figure.

- To be connected to all compute servers through a network, the DC infrastructure network in the figure.

- To offer management IP addresses to VNFs for VNF configuration from CM (Juju server). That's the purpose of the Telco/VNF management network.

Compute nodes, besides being connected to the DC infrastructure network, must also be connected to two additional networks:

- Telco/VNF management network, used by Configuration Manager (Juju Server) to configure the VNFs

- Inter-DC network, optionally required to interconnect this datacenter to other datacenters (e.g. in MWC'16 demo, to interconnect the two sites).

VMs will be connected to these two networks at deployment time if requested by openmano.

VM creation (openvim server)

- Requirements:

- 1 vCPU (2 recommended)

- 4 GB RAM (4 GB are required to run OpenDaylight controller; if the ODL controller runs outside the VM, 2 GB RAM are enough)

- 40 GB disk

- 3 network interfaces to:

- OSM network (to interact with RO)

- DC infrastructure network (to interact with the compute servers and switches)

- Telco/VNF management network (to provide IP addresses via DHCP to the VNFs)

- Base image: ubuntu-16.04-server-amd64

Installation

Openvim (v1.0.1) is installed using a script:

wget -O install-openvim.sh "https://osm.etsi.org/gitweb/?p=osm/openvim.git;a=blob_plain;f=scripts/install-openvim.sh;h=88a92dd42804b805269ab56d1350406e0ab93a4c;hb=refs/tags/v1.0.1"

chmod +x install-openvim.sh

sudo ./install-openvim.sh [-u <database-admin-user>] [-p <database-admin-password>]

#NOTE: you can optionally provide the admin user (normally 'root') and password of the database.

On Ubuntu 16.04 it will install openvim as a service in /opt/openvim.

Openflow controller

For the normal or OF only openvim modes you will need an openflow controller. You can install, for example, floodlight-0.90. The script openvim/scripts/install-floodlight.sh performs these steps for you, and the script service-floodlight can be used to start/stop it in a screen with logs.

DHCP server

For the management network a DHCP server is needed. On Ubuntu 14.04, install the package dhcp3-server, which installs the actual DHCP server based on the isc-dhcp-server package. On Ubuntu 16.04, install isc-dhcp-server directly.

Ubuntu 14.04: sudo apt-get install dhcp3-server

Ubuntu 16.04: sudo apt install isc-dhcp-server

Configure it by editing the file /etc/default/isc-dhcp-server to enable the DHCP server on the appropriate interface, the one attached to the Telco/VNF management network (e.g. eth1).

$ sudo vi /etc/default/isc-dhcp-server

INTERFACES="eth1"
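For unattended setups, the same edit can be scripted instead of done in an editor. The following is a minimal sketch, not part of openvim: the function name set_dhcp_interface is hypothetical, and it assumes the defaults file holds at most simple INTERFACES=... lines.

```shell
# Hypothetical helper (not shipped with openvim): set INTERFACES="<iface>"
# in a defaults file such as /etc/default/isc-dhcp-server.
# Usage: set_dhcp_interface <file> <interface>
set_dhcp_interface() {
    file=$1
    iface=$2
    [ -f "$file" ] || return 1
    tmp=$(mktemp) || return 1
    # Keep every line except an existing INTERFACES= setting.
    grep -v '^INTERFACES=' "$file" > "$tmp" || true
    # Append the new setting.
    printf 'INTERFACES="%s"\n' "$iface" >> "$tmp"
    # Write back in place so the file keeps its owner and permissions.
    cat "$tmp" > "$file"
    rm -f "$tmp"
}
```

Since /etc/default/isc-dhcp-server is owned by root, the function would have to run in a root shell, for instance after trying it first on a copy of the file.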

Edit the file /etc/dhcp/dhcpd.conf to specify the subnet, netmask and range of IP addresses to be offered by the server.

$ sudo vi /etc/dhcp/dhcpd.conf

ddns-update-style none;

default-lease-time 86400;

max-lease-time 86400;

log-facility local7;

option subnet-mask 255.255.0.0;

option broadcast-address 10.210.255.255;

subnet 10.210.0.0 netmask 255.255.0.0 {

range 10.210.1.2 10.210.1.254;

}

Restart the service:

sudo service isc-dhcp-server restart
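A range that falls outside the declared subnet is an easy mistake to make in dhcpd.conf. As a sanity check, the sketch below tests whether an address belongs to a subnet using plain shell arithmetic. The function names ip_to_int and in_subnet are hypothetical helpers, not part of openvim or the DHCP package.

```shell
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    # Split the address on dots into $1..$4.
    set -- $1
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if address $1 belongs to subnet $2 with netmask $3.
in_subnet() {
    addr=$(ip_to_int "$1")
    net=$(ip_to_int "$2")
    mask=$(ip_to_int "$3")
    [ $(( addr & mask )) -eq $(( net & mask )) ]
}

# Check both ends of the range used in the example configuration.
in_subnet 10.210.1.2   10.210.0.0 255.255.0.0 && echo "range start OK"
in_subnet 10.210.1.254 10.210.0.0 255.255.0.0 && echo "range end OK"
```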

Configuration

- Go to the openvim folder (/opt/openvim) and edit openvimd.cfg. Note: by default it runs in mode: test, where neither real hosts nor an openflow controller are needed. You can use other modes:

| mode | Compute hosts | Openflow controller | Observations |
|---|---|---|---|
| test | fake | X | No real deployment. Just for API testing |
| normal | needed | needed | Normal behaviour |
| host only | needed | X | No PT/SRIOV connections |
| develop | needed | X | Forces cloud-type deployment without EPA |
| OF only | fake | needed | To test the openflow controller without needing compute hosts |

The service must be restarted after changing the configuration:

sudo service openvim restart
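When scripting a deployment, it can help to encode the table of modes as a lookup so a pre-flight check knows what each mode expects. This is only a sketch: the function name mode_requirements is hypothetical, and it reads the "X" entries of the table as "not needed".

```shell
# Print what a given openvim mode requires, following the table of modes.
# "X" in the table is interpreted here as "openflow=no" (an assumption).
mode_requirements() {
    case "$1" in
        test)        echo "hosts=fake openflow=no" ;;
        normal)      echo "hosts=needed openflow=needed" ;;
        "host only") echo "hosts=needed openflow=no" ;;
        develop)     echo "hosts=needed openflow=no" ;;
        "OF only")   echo "hosts=fake openflow=needed" ;;
        *)           echo "unknown mode: $1" >&2; return 1 ;;
    esac
}

mode_requirements "host only"
```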

NOTE: the following steps are done automatically by this script (ONLY if openvim runs in test mode):

/opt/openvim/scripts/initopenvim.sh --insert-bashrc --force

- Let's configure the openvim CLI client. This is needed if you have changed the /opt/openvim/openvimd.cfg file (WARNING: not ./openvim/openvimd.cfg).

openvim config # show openvim related variables

#To change variables run

export OPENVIM_HOST=<http_host of openvimd.cfg>

export OPENVIM_PORT=<http_port of openvimd.cfg>

export OPENVIM_ADMIN_PORT=<http_admin_port of openvimd.cfg>

#You can add them to .bashrc for automatic loading at login:

echo "export OPENVIM_HOST=<...>" >> ${HOME}/.bashrc

...
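The three variables can also be derived from the configuration file rather than typed by hand. The sketch below assumes that openvimd.cfg stores http_host, http_port and http_admin_port as plain, unquoted "key: value" lines. The helper names cfg_value and openvim_client_exports are hypothetical.

```shell
# Read one "key: value" entry from a config file (assumed plain and unquoted).
# Usage: cfg_value <file> <key>
cfg_value() {
    sed -n "s/^$2:[[:space:]]*\([^#[:space:]]*\).*/\1/p" "$1" | head -n 1
}

# Print export lines for the openvim client from the given config file.
# Defaults to the installed location /opt/openvim/openvimd.cfg.
openvim_client_exports() {
    cfg=${1:-/opt/openvim/openvimd.cfg}
    echo "export OPENVIM_HOST=$(cfg_value "$cfg" http_host)"
    echo "export OPENVIM_PORT=$(cfg_value "$cfg" http_port)"
    echo "export OPENVIM_ADMIN_PORT=$(cfg_value "$cfg" http_admin_port)"
}
```

Running eval "$(openvim_client_exports)" would load the values into the current shell, and openvim config can then be used to confirm them.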

Adding compute nodes

- Let's attach compute nodes

In test mode we need to provide fake compute nodes with all the necessary information:

openvim host-add /opt/openvim/test/hosts/host-example0.yaml

openvim host-add /opt/openvim/test/hosts/host-example1.yaml

openvim host-add /opt/openvim/test/hosts/host-example2.yaml

openvim host-add /opt/openvim/test/hosts/host-example3.yaml

openvim host-list #-v,-vv,-vvv for verbosity levels
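The four host-add calls can be written as a loop. In this sketch the function name add_example_hosts and the OPENVIM_CMD variable are hypothetical. Setting OPENVIM_CMD=echo gives a dry run that only prints the arguments that would be passed to the client.

```shell
# Add the four example hosts shipped for test mode.
# OPENVIM_CMD (hypothetical) overrides the client binary, e.g. "echo" for a dry run.
add_example_hosts() {
    dir=${1:-/opt/openvim/test/hosts}
    for i in 0 1 2 3; do
        # Stop at the first failure so a bad file is noticed immediately.
        "${OPENVIM_CMD:-openvim}" host-add "$dir/host-example$i.yaml" || return 1
    done
}
```

After a real run, openvim host-list should show the hosts that were added.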

In normal or host only mode, the process is a bit more complex. First, you need to configure the host appropriately by following the Compute node configuration guide. The current process is manual, although we are working on an automated process. For the moment, follow these instructions:

#copy /opt/openvim/scripts/host-add.sh and run it at the compute host to gather all the information

./host-add.sh <user> <ip_name> >> host.yaml

#NOTE: If the host contains interfaces connected to the openflow switch for dataplane,

# the switch port where the interfaces are connected must be provided manually,

# otherwise these interfaces cannot be used. Follow one of two methods:

# 1) Fill openvim/database_utils/of_ports_pci_correspondence.sql ...

# ... and load with mysql -uvim -p vim_db < openvim/database_utils/of_ports_pci_correspondence.sql

# 2) or add manually this information at generated host.yaml with a 'switch_port: <whatever>'

# ... entry at 'host-data':'numas': 'interfaces'

# copy this generated file host.yaml to the openvim server, and add the compute host with the command:

openvim host-add host.yaml

# copy the openvim ssh key to the compute node. If the openvim user does not have an ssh key, generate it using ssh-keygen

ssh-copy-id <compute node user>@<IP address of the compute node>

Note: Openvim has been tested with servers based on Intel Xeon E5 processors with the Ivy Bridge architecture. No tests have been carried out with the Intel Core i3, i5 and i7 families, so there are no guarantees that the integration will be seamless.

Adding external networks

- Let's list the external networks:

openvim net-list

- Let's create some external networks in openvim. These networks are public and can be used by any VNF. Note that these networks must be pre-provisioned in the compute nodes in order to be effectively used by the VNFs. The pre-provisioning is skipped here since we are in test mode. Four networks will be created:

- default -> default NAT network provided by libvirt. By creating this network, VMs will be able to connect to default network in the same host where they are deployed.

- macvtap:em1 -> macvtap network associated to interface "em1". By creating this network, we allow VMs to connect to a macvtap interface of physical interface "em1" in the same host where they are deployed. If the interface naming scheme is different, use the appropriate name instead of "em1".

- bridge_net -> bridged network intended for VM-to-VM communication. The pre-provision of a Linux bridge in all compute nodes is described in Compute node configuration#pre-provision-of-linux-bridges. By creating this network, VMs will be able to connect to the Linux bridge "virbrMan1" in the same host where they are deployed. In that way, two VMs connected to "virbrMan1", no matter the host, will be able to talk to each other.

- data_net -> external data network intended for VM-to-VM communication. By creating this network, VMs will be able to connect to a network element connected behind a physical port in the external switch.

In order to create external networks, use 'openvim net-create', specifying a file with the network information. Now we will create the 4 networks:

openvim net-create /opt/openvim/test/networks/net-example0.yaml

openvim net-create /opt/openvim/test/networks/net-example1.yaml

openvim net-create /opt/openvim/test/networks/net-example2.yaml

openvim net-create /opt/openvim/test/networks/net-example3.yaml

- Let's list the external networks:

openvim net-list

2c386a58-e2b5-11e4-a3c9-52540032c4fa data_net

35671f9e-e2b4-11e4-a3c9-52540032c4fa default

79769aa2-e2b4-11e4-a3c9-52540032c4fa macvtap:em1

8f597eb6-e2b4-11e4-a3c9-52540032c4fa bridge_net
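Scripts that need the UUID of a particular network can filter this listing. The sketch below assumes that openvim net-list prints one "uuid name" pair per line, as in the output shown here. The function name net_uuid is hypothetical.

```shell
# Print the UUID of the network named $1, reading "uuid name" lines on stdin.
# Returns non-zero when no network with that name is listed.
net_uuid() {
    awk -v name="$1" '
        $2 == name { print $1; found = 1; exit }
        END        { exit !found }
    '
}
```

A typical call would be openvim net-list | net_uuid bridge_net.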

You can build your own networks using the template 'templates/network.yaml'. Alternatively, you can use 'openvim net-create' without a file and answer the questions:

openvim net-create

You can delete a network, e.g. "macvtap:em1", using the command:

openvim net-delete macvtap:em1

Creating a new tenant

- Now let's create a new tenant "admin":

$ openvim tenant-create --name admin --description admin

<uuid> admin Created

- Take the uuid of the tenant and update the environment variables used by openvim client:

export OPENVIM_TENANT=<obtained uuid>

#echo "export OPENVIM_TENANT=<obtained uuid>" >> /home/${USER}/.bashrc

openvim config #show openvim env variables
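The tenant UUID can be captured directly from the tenant-create output instead of being copied by hand. This sketch assumes the output has the form "<uuid> admin Created" shown above. The function name tenant_uuid is hypothetical, and it rejects anything that does not start with a well-formed UUID.

```shell
# Extract the leading UUID from tenant-create output read on stdin.
# Returns non-zero if no line starts with a well-formed lowercase UUID.
tenant_uuid() {
    uuid=$(sed -n 's/^\([0-9a-f]\{8\}\(-[0-9a-f]\{4\}\)\{3\}-[0-9a-f]\{12\}\)[[:space:]].*/\1/p' | head -n 1)
    [ -n "$uuid" ] && echo "$uuid"
}
```

It could be used as export OPENVIM_TENANT=$(openvim tenant-create --name admin --description admin | tenant_uuid), followed by openvim config to check the result.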