OpenVIM installation to be used with OSM release 0

Edited by Garciadeblas

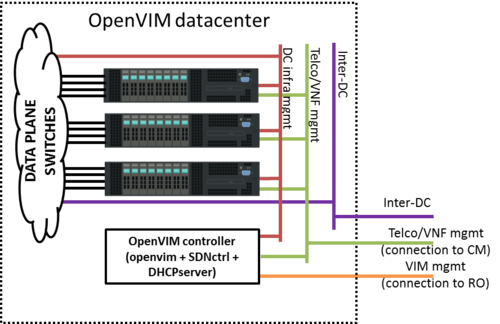


Revision as of 10:39, 25 May 2016

Infrastructure

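In order to run openvim and deploy dataplane VNFs, an appropriate infrastructure is required. Details on the required infrastructure can be found [here](https://github.com/nfvlabs/openvim/wiki/Getting-started#requirements). The Openvim Datacenter infrastructure figure shows a reference architecture for an openvim-based DC deployment.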

Openvim needs:

- To make its API accessible from the Resource Orchestrator (openmano). This is the purpose of the VIM mgmt network in the figure.

- To be connected to all compute servers through a network, the DC infrastructure network in the figure.

- To offer management IP addresses to VNFs, so that the Configuration Manager (Juju server) can configure them. This is the purpose of the Telco/VNF management network.

Compute nodes, besides being connected to the DC infrastructure network, must also be connected to two additional networks:

- Telco/VNF management network, used by Configuration Manager (Juju Server) to configure the VNFs

- Inter-DC network, an optional network used to interconnect this datacenter with other datacenters (e.g. in the MWC'16 demo, to interconnect the two sites).

VMs will be connected to these two networks at deployment time if requested by openmano.

VM creation (openvim server)

- Requirements:

  - 1 vCPU (2 recommended)

  - 4 GB RAM (required to run the OpenDaylight controller; if the ODL controller runs outside the VM, 2 GB RAM is enough)

  - 40 GB disk

  - 3 network interfaces to:

    - OSM network (to interact with RO)

    - DC infrastructure network (to interact with the compute servers and switches)

    - Telco/VNF management network (to provide IP addresses via DHCP to the VNFs)

- Base image: ubuntu-14.04.4-server-amd64
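To confirm that a freshly created VM meets these minimums before installing anything, a small check script can help. The sketch below is not part of openvim: the function names `meets_requirements` and `check_this_vm` are hypothetical, and the 5% RAM tolerance is an assumption (the kernel reports slightly less memory than the VM is assigned). It reads values from `nproc`, `/proc/meminfo`, `df` and `/sys/class/net`, so it assumes a Linux guest such as the Ubuntu base image.

```shell
#!/usr/bin/env bash
# Hypothetical preflight check for the openvim server VM (not part of openvim).
# Minimums from the requirements list: 1 vCPU, 4 GB RAM if OpenDaylight runs in
# the VM (2 GB otherwise), 40 GB disk, 3 network interfaces.

# meets_requirements CPUS RAM_MB DISK_GB NICS ODL_IN_VM(yes|no)
# Prints one line per failed check; returns 0 only if every minimum is met.
meets_requirements() {
  local cpus=$1 ram_mb=$2 disk_gb=$3 nics=$4 odl_in_vm=$5
  local min_ram_mb=2048 rc=0
  if [ "$odl_in_vm" = "yes" ]; then
    min_ram_mb=4096
  fi
  if [ "$cpus" -lt 1 ]; then
    echo "vCPUs: $cpus < 1"; rc=1
  fi
  # Assumed 5% tolerance: the kernel reports a bit less RAM than the VM is given.
  if [ "$ram_mb" -lt $((min_ram_mb * 95 / 100)) ]; then
    echo "RAM: $ram_mb MB is below the $min_ram_mb MB minimum"; rc=1
  fi
  if [ "$disk_gb" -lt 40 ]; then
    echo "disk: $disk_gb GB < 40 GB"; rc=1
  fi
  if [ "$nics" -lt 3 ]; then
    echo "NICs: $nics < 3"; rc=1
  fi
  return "$rc"
}

# check_this_vm [yes|no]: gathers the values from the running Linux system.
check_this_vm() {
  local cpus ram_mb disk_gb nics
  cpus=$(nproc)
  ram_mb=$(awk '/^MemTotal:/ {print int($2 / 1024)}' /proc/meminfo)
  # Size of the root filesystem in GB (second column of the df data line).
  disk_gb=$(df -P -BG / | awk 'NR == 2 {print int($2)}')
  # Every interface except loopback is counted.
  nics=$(ls /sys/class/net | grep -vc '^lo$')
  meets_requirements "$cpus" "$ram_mb" "$disk_gb" "$nics" "${1:-yes}"
}
```

For example, `check_this_vm yes` prints each failed check and returns non-zero if any minimum is not met; pass `no` when the OpenDaylight controller runs outside the VM. Extra virtual interfaces (bridges, for instance) are also counted, so treat the NIC check as a rough indication only.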

Installation

The installation process is detailed in the [GitHub openvim repo](https://github.com/nfvlabs/openvim/wiki/Getting-started#installation-endusers). This process must be followed to install the required packages, the openvim software, an OpenFlow controller (either OpenDaylight or Floodlight) to control the underlay switch, and a DHCP server (isc-dhcp-server) to assign management IP addresses to VNFs.

Configuration

Configuration details can be found [here](https://github.com/nfvlabs/openvim/wiki/Getting-started#configuration). For the reader's convenience, they are also reproduced below:

Configuring the OpenFlow controller

OpenDaylight configuration

Floodlight configuration

- Go to the scripts folder and edit the file **flow.properties**, setting the appropriate port values.

- Start Floodlight:

```
service-openvim floodlight start   # creates a screen named "flow" and starts the OpenFlow controller in it
screen -x flow                     # attaches to the floodlight screen
[Ctrl+a , d]                       # detaches from the screen
less openvim/logs/openflow.0       # shows the controller log
```
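If you prefer to script the edit of **flow.properties** rather than doing it by hand, a helper like the one below can set a port entry in place. It is a sketch under assumptions: it treats flow.properties as a Java-style `key = value` properties file, the function name `set_port` is hypothetical, and the actual property keys must be taken from the file itself (they are not listed in this guide).

```shell
#!/usr/bin/env bash
# Sketch: set a port entry in a Java-style properties file such as flow.properties.
# The property keys are NOT listed here; take them from the actual file.

# set_port FILE KEY PORT: replaces the value of KEY, or appends "KEY = PORT" if absent.
set_port() {
  local file=$1 key=$2 port=$3 key_re
  # Accept only numeric TCP ports, which also keeps the value safe inside sed.
  case $port in
    ''|*[!0-9]*) echo "invalid port: $port" >&2; return 1 ;;
  esac
  if [ "$port" -lt 1 ] || [ "$port" -gt 65535 ]; then
    echo "port out of range: $port" >&2; return 1
  fi
  # Escape characters that are special in a basic regular expression (e.g. the dots).
  key_re=$(printf '%s' "$key" | sed 's/[][\.*^$]/\\&/g')
  if grep -q "^[[:space:]]*${key_re}[[:space:]]*=" "$file"; then
    # Keep the "key = " prefix as written and replace only the value.
    sed -i "s#^\([[:space:]]*${key_re}[[:space:]]*=[[:space:]]*\).*#\1${port}#" "$file"
  else
    printf '%s = %s\n' "$key" "$port" >> "$file"
  fi
}
```

For example, `set_port flow.properties <openflow-port-key> 6633` would set the OpenFlow listening port to 6633 (the traditional OpenFlow port), where `<openflow-port-key>` stands for the corresponding key in your flow.properties. The sketch relies on GNU `sed -i`, as found on the Ubuntu base image.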

Configuring DHCP server

Openvim server configuration

- Go to the openvim folder and edit openvimd.cfg. By default, openvim runs in 'mode: **test**', where neither real hosts nor an OpenFlow controller are needed. Change it to 'mode: **normal**' to use both real hosts and the OpenFlow controller.

- Start the openvim server:

```
service-openmano openvim start   # creates a screen named "vim" and starts the "./openvim/openvimd.py" program in it
screen -x vim                    # attaches to the openvim screen
[Ctrl+a , d]                     # detaches from the screen
less openvim/logs/openvim.0      # shows the openvim log
```
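The mode change in openvimd.cfg can also be scripted. The sketch below assumes that the setting is a single top-level YAML line `mode: <value>`, as in the 'mode: test' default described above; `set_openvim_mode` and `get_openvim_mode` are hypothetical helper names, and only the two modes mentioned in this guide (test and normal) are accepted.

```shell
#!/usr/bin/env bash
# Sketch: read or switch the mode in openvimd.cfg.
# Assumes the setting is one top-level YAML line of the form "mode: <value>".

# get_openvim_mode CFG: prints the configured mode.
get_openvim_mode() {
  sed -n 's/^mode:[[:space:]]*\([^[:space:]#]*\).*/\1/p' "$1"
}

# set_openvim_mode CFG MODE: MODE is "test" or "normal"; CFG.bak keeps the old file.
set_openvim_mode() {
  local cfg=$1 mode=$2
  case $mode in
    test|normal) ;;
    *) echo "unsupported mode: $mode (this sketch only accepts test or normal)" >&2; return 1 ;;
  esac
  if ! grep -q '^mode:' "$cfg"; then
    echo "no top-level 'mode:' line found in $cfg" >&2; return 1
  fi
  # Rewrite the whole line; a trailing comment on that line is dropped.
  sed -i.bak "s/^mode:.*/mode: ${mode}/" "$cfg"
}
```

For example, `set_openvim_mode openvim/openvimd.cfg normal` switches to normal mode and keeps the previous file as `openvimd.cfg.bak`. The openvim server presumably needs to be started again so that the new mode is read.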

Openvim client configuration

- Show the openvim CLI client environment variables:

```
openvim config   # shows openvim-related variables
```

- Set the environment variables accordingly:

```
# To change the variables, run:
export OPENVIM_HOST=<http_host of openvimd.cfg>
export OPENVIM_PORT=<http_port of openvimd.cfg>
export OPENVIM_ADMIN_PORT=<http_admin_port of openvimd.cfg>

# Optionally, append them to .bashrc so they are loaded automatically at login:
echo "export OPENVIM_HOST=<...>" >> /home/${USER}/.bashrc
...
```
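Since the three variables mirror values already present in openvimd.cfg, they can also be read from that file. A minimal sketch, assuming `http_host`, `http_port` and `http_admin_port` appear as top-level `key: value` lines (optionally quoted or followed by a `#` comment); `cfg_value` and `export_openvim_env` are hypothetical helpers, not part of the openvim client.

```shell
#!/usr/bin/env bash
# Sketch: set the openvim client variables from the values in openvimd.cfg.
# Assumes http_host, http_port and http_admin_port are top-level "key: value"
# lines, possibly quoted and possibly followed by a "#" comment.

# cfg_value CFG KEY: prints the value of the first top-level "KEY:" line.
cfg_value() {
  awk -v key="$2" '
    index($0, key ":") == 1 {
      sub("^[^:]*:[ \t]*", "")       # drop the "key:" prefix
      sub("[ \t]*#.*$", "")          # drop a trailing comment
      gsub("[\"\047]", "")           # drop single or double quotes
      print
      exit
    }' "$1"
}

# export_openvim_env CFG: exports OPENVIM_HOST, OPENVIM_PORT and OPENVIM_ADMIN_PORT.
export_openvim_env() {
  local cfg=$1
  OPENVIM_HOST=$(cfg_value "$cfg" http_host)
  OPENVIM_PORT=$(cfg_value "$cfg" http_port)
  OPENVIM_ADMIN_PORT=$(cfg_value "$cfg" http_admin_port)
  export OPENVIM_HOST OPENVIM_PORT OPENVIM_ADMIN_PORT
}
```

Source the functions in the current shell (so the exports persist) and run `export_openvim_env openvim/openvimd.cfg`, then check the result with `openvim config`. If `http_host` is a wildcard bind address such as 0.0.0.0, set OPENVIM_HOST to an address the client can actually reach instead.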

Create a tenant in openvim to be used by OSM

- Create a tenant that will be used by OSM:

```
openvim tenant-create --name osm --description "Tenant for OSM"
```

- Take the UUID of the new tenant and update the environment variables used by the openvim client:

```
export OPENVIM_TENANT=<obtained uuid>
# Optionally, to load it automatically at login:
# echo "export OPENVIM_TENANT=<obtained uuid>" >> /home/${USER}/.bashrc
openvim config   # shows openvim env variables
```
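Copying the UUID by hand can be avoided by extracting it from the command output. This sketch assumes that the output of `openvim tenant-create` contains the tenant UUID in the canonical 8-4-4-4-12 hexadecimal form and that it is the first UUID printed; the helpers `extract_uuid` and `create_osm_tenant` are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: capture the tenant UUID instead of copying it by hand.
# Assumption: "openvim tenant-create" prints the new tenant UUID in the canonical
# 8-4-4-4-12 hexadecimal form, and it is the first UUID in the output.

# extract_uuid: reads text on stdin and prints the first UUID found (if any).
extract_uuid() {
  grep -oiE '[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}' | head -n 1
}

# create_osm_tenant: runs the tenant-create command above and exports OPENVIM_TENANT.
create_osm_tenant() {
  local uuid
  uuid=$(openvim tenant-create --name osm --description "Tenant for OSM" | extract_uuid)
  if [ -z "$uuid" ]; then
    echo "no UUID found in the tenant-create output" >&2
    return 1
  fi
  export OPENVIM_TENANT="$uuid"
}
```

After sourcing the functions in the current shell, `create_osm_tenant && openvim config` creates the tenant and shows the updated variables.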

Additional configuration

Finally, compute nodes and external networks must also be added to openvim. Details on how to do this can be found here.